4.1. Functional properties of ACAS-Xu¶

ACAS-Xu stands for Aircraft Collision Avoidance System. Introduced for instance in [Manfredi2016], it is a specification of a program which aim to output signals for an aircraft in a situation where there is a potential for collision. In the rest of this tutorial, we will use the flavour ACAS-Xu defined in [Katz2017], where the authors aim to verify a neural network implementing part of the ACAS-Xu specification. Its low dimensionality and well-defined semantics make it a de facto benchmark for machine learning verification.

4.1.1. Use case presentation¶

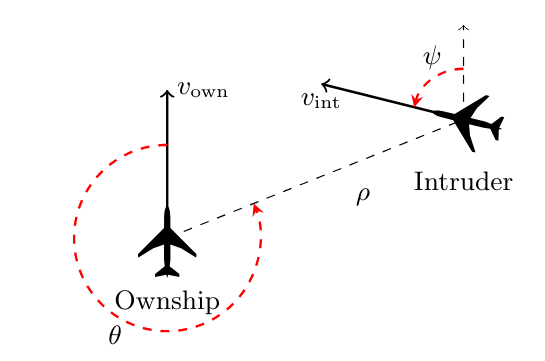

The system considers a 2D plane with two entities: the monitored airplane (the “ownship”) and an incoming airplane (the “intruder”).

In the original implementation, the system state has seven inputs:

\(v_{own}\): speed of ownship

\(v_{int}\): speed of intruder

\(\rho\): distance from ownship to intruder

\(\theta\): angle to intruder relative to ownship heading direction

\(\psi\): heading angle of intruder relative to ownship heading direction

\(\tau\): time until vertical separation

\(a_{prev}\): previous advisory

It has five outputs, that correspond to the different direction advisories the system can give:

\(COC\): Clear Of Conflict

\(WL\): Weak Left

\(SL\): Strong Left

\(WR\): Weak Right

\(SR\): Strong Right

In the original paper, the authors consider \(45\) neural networks, for several values of \(\tau\) and \(a_{prev}\), that operate on five inputs only while maintaining the same number of outputs. We will consider five-inputs networks in the remaining of this example.

4.1.2. Properties¶

There are several functional properties one may want to verify on this system, for instance:

Guarantee that the system will never output COC advisory when the intruder is nearby,

Guarantee that the system will never output an advisory that may result in a collision,

Guarantee that the system will not output a strong advisory where a weak variant would be enough.

Authors of [Katz2017] propose ten properties to verify. We will reproduce the first and third properties here, and then show how to use CAISAR for verifying whether a given neural network respects them.

Property \(\phi_1\)

Definition. If the intruder is distant and is significantly slower than the ownship, the score of a COC advisory will always be below a certain fixed threshold.

Input constraints:

\(\rho \geq 55947.691\),

\(v_{own} \geq 1145\),

\(v_{int} \leq 60\).

Desired output property:

\(COC \leq 1500\).

Property \(\phi_3\)

Definition. If the intruder is directly ahead and is moving towards the ownship, the score for COC will not be minimal.

Input constraints:

\(1500 \leq \rho \leq 1800\),

\(-0.06 \leq \theta \leq 0.06\),

\(\psi \geq 3.10\),

\(v_{own} \geq 980\),

\(v_{int} \geq 960\).

Desired output property:

\(COC\) is not the minimal score.

4.1.2.1. Normalization Process¶

Properties define arithmetic constraints over numerical value coming from aeronautical physics. However, neural networks typically operate on normalized values, where original quantities are typically rescaled to a fixed range, such as \([0,1]\) or \([-1,1]\). As such, original authors verify properties on normalized values.

CAISAR can formally specify this normalization process, bridging the gap between properties expressed on expert-domain values and their counterparts on the values actually used by neural networks.

4.1.3. Modelling the problem using WhyML¶

4.1.3.1. Importing symbols¶

The first step for verifying anything with CAISAR is to write a specification file that describe the problem to verify as a so-called theory. A theory can be seen as a namespace inside which are defined logical terms, formulas and verification goals. In particular, being based on the Why3 platform for deductive program verification, CAISAR supports the Why3 specification language WhyML, and inherits the Why3 standard library of logical theories (integer, float and real arithmetic, etc.) and basic programming data structures (arrays, queues, hash tables, etc.).

Additionnaly, CAISAR extends WhyML by providing several builtins predicates and function symbols. The full extend of those additions is documented in _built-in:. This example will use some of those extensions to display the capabilities of CAISAR; the wording “WhyML extensions” will be used when this happens.

Let us model the property \(\phi_1\) defined earlier. We will define

a global theory ACASXU, and perform some basic imports. Each theory must

end by the end keyword.

theory ACASXU

use ieee_float.Float64

use int.Int

end

We will need to write down some numerical values. As of now, CAISAR allows

writing values using floating-point arithmetic and integers only.

Why3 defines a float type

and the relevant arithmetic operations according to the IEEE 754 floating-point

standard in a theory, astutely called ieee_float. Specifically, we will

import the Float64 sub-theory, that defines everything we need for 64-bit

precision floating-point numbers, and the Int sub-theory for integer arithmetic.

We thus import them in our theory using the

use keyword. This currently requires to write exact IEEE 754 floating point

values in the specification file, which is understandably a setback in terms of

usability, but have the advantage of making the specification unambiguous.

We would like to verify our property given a certain neural network. To do this, CAISAR provide WhyML extensions to recognize and apply neural networks in ONNX and NNet formats on vector inputs. Given a file of such formats, CAISAR is able to provide the following:

a logical symbol of type

model, built using theread_modelfunction, of typestring -> model. The argument is the path to the machine learning model file;a function symbol

@@of typemodel -> vector 'a -> vector 'a, that returns the output of the application of the neural network to a given input;types and predicates to manipulate inputs vectors;

The full reference for those WhyML extensions is available under the

stdlib/caisar/types.mlw and

stdlib/caisar/model.mlw

files. To create a logical symbol for a neural network located in

examples/nets/onnx/ACASXU_1_1.onnx, we can import the relevant theories in our file

and use the read_model function symbol like this:

use caisar.types.Vector

use caisar.model.Model

type input = vector t

type output = vector t

constant nn_1_1: model = read_model "nets/onnx/ACASXU_1_1.onnx"

We will define additional constants to write more concise specifications. For instance, we can define the meaning of input and output vector indices.

let constant distance_to_intruder: int = 0

let constant angle_to_intruder: int = 1

let constant intruder_heading: int = 2

let constant speed: int = 3

let constant intruder_speed: int = 4

type action = int

let constant clear_of_conflict: action = 0

let constant weak_left: action = 1

let constant weak_right: action = 2

let constant strong_left: action = 3

let constant strong_right: action = 4

We can also define a predicate that encodes the admissible range of values. As one vector coordinate is a radian angle, we will define the \(\pi\) constant, in IEEE Float64 compliant format.

let constant pi: t = 3.141592653589793115997963468544185161590576171875000

predicate valid_input (i: input) =

(0.0:t) .<= i[distance_to_intruder] .<= (60760.0:t)

/\ .- pi .<= i[angle_to_intruder] .<= pi

/\ .- pi .<= i[intruder_heading] .<= pi

/\ (100.0:t) .<= i[speed] .<= (1200.0:t)

/\ (0.0:t) .<= i[intruder_speed] .<= (1200.0:t)

Finally, we can write predicates that ensure that it is not possible to access invalid indices in the output vector. From there, we can write two predicates that are true when the predicted action has the minimal score (resp. the maximal score).

predicate valid_action (a: action) =

clear_of_conflict <= a <= strong_right

predicate advises_min_score (o: output) (a: action) =

valid_action a ->

forall b: action. valid_action b -> a <> b -> o[a] .<= o[b]

predicate advises_max_score (o: output) (a: action) =

valid_action a ->

forall b: action. valid_action b -> a <> b -> o[a] .>= o[b]

4.1.3.2. Specifying the normalization process¶

The domain-expert properties constraint values to live in the realm of real physics, with values in really large intervals (for instance, aircraft speeds in m/s). Neural networks, however, require normalized values with a relatively small variance. As such, most of machine learning programs require a normalization step prior to any computation. Normalization then change the expected inputs (and outputs) of the program. For a specification to stay faithful, it must then model the normalization step and lift back values from the normalized values space to the original specification value space.

More formally, a specification \(\Phi\) defines pre-conditions \(P(\tilde{x})\) (resp. post-conditions \(Q(\tilde{y})\)) in the input (resp. output) specification space \(\Sigma_i\) (resp. \(\Sigma_o\)). A program computes values from an input space to an output space \(f : x \in \mathcal{X} \mapsto y \in \mathcal{Y}\). A normalization (resp. denormalization) is a function \(n : \tilde{x} \in \Sigma_i \mapsto \mathcal{X}\) (resp. \(d : \mathcal{Y} \mapsto \tilde{y} \in \Sigma_o\)). As such, the specification \(\Phi\) yields properties on the following computation: \(d \circ f \circ n\). We will define these normalization and denormalization steps in CAISAR.

In this setting, given a mean \(\mu \in \mathbb{R}\) and a standard deviation \(\sigma \in \mathbb{R}\), normalization is the operation \(\tilde{x} = \frac{x - \mu}{\sigma}\). The denormalization is the inverse operation \(x = \tilde{x}*\sigma + \mu\). We will define those operations in WhyML.

let function normalize_t (i: t) (mean: t) (range: t) : t =

(i .- mean) ./ range

let function denormalize_t (i: t) (mean: t) (range: t) : t =

(i .* range) .+ mean

Note that those operations are for floating-point scalar values. To map the normalization function to an input vector, we must map the function to each of the vector’s coordinates.

Warning

For this specific benchmark, we refer to the IEEE 754 floating-point representation of the normalized values the original authors provide in their repository.

let function normalize_by_index (idx: int) (t: t) : t =

if idx = distance_to_intruder then normalize_t t (19791.0:t) (60261.0:t)

else if idx = angle_to_intruder then normalize_t t (0.0:t) (6.2831853071800001231395071954466402530670166015625:t)

else if idx = intruder_heading then normalize_t t (0.0:t) (6.2831853071800001231395071954466402530670166015625:t)

else if idx = speed then normalize_t t (650.0:t) (1100.0:t)

else if idx = intruder_speed then normalize_t t (600.0:t) (1200.0:t)

else t

let function normalize_input (i: input) : input =

Vector.mapi i normalize_by_index

let function denormalize_by_index (idx: int) (t: t) : t =

if idx = distance_to_intruder then denormalize_t t (19791.0:t) (60261.0:t)

else if idx = angle_to_intruder then denormalize_t t (0.0:t) (6.2831853071800001231395071954466402530670166015625:t)

else if idx = intruder_heading then denormalize_t t (0.0:t) (6.2831853071800001231395071954466402530670166015625:t)

else if idx = speed then denormalize_t t (650.0:t) (1100.0:t)

else if idx = intruder_speed then denormalize_t t (600.0:t) (1200.0:t)

else t

let function denormalize_input (i: input) : input =

Vector.mapi i denormalize_by_index

let function normalize_output_t (o: t) : t =

normalize_t o (7.51888402010059753166615337249822914600372314453125:t) (373.9499200000000200816430151462554931640625:t)

4.1.3.3. Writing the verification goal¶

Now is the time to define our verification goal. We first need to model the inputs of the neural network \(\rho, \theta, \psi, v_{own}, v_{int}\) to the range of floating-point values each may take.

predicate intruder_distant_and_slow (i: input) =

i[distance_to_intruder] .>= (55947.6909999999988940544426441192626953125:t)

/\ i[speed] .>= (1145.0:t)

/\ i[intruder_speed] .<= (60.0:t)

Finally, we can actually write the verification goal, P1, for

property \(\phi_1\) on neural network \(N_{1,1}\).

goal P1:

forall i: input.

has_length i 5 ->

let j = denormalize_input i in

valid_input j /\ intruder_distant_and_slow j ->

let o = (nn @@ i)[clear_of_conflict] in

(denormalize_output_t o) .<= (1500.0:t)

We must define the result of the application of nn on the inputs.

The built-in function @@ serves this purpose: given a neural network model

and an input vector x, @@ return the vector that is the result of the

application of model on x. Note that thanks to type polymorphism,

@@ can be used to describe a variety of input vectors, including floating

points, integers, or strings. We can finally define the output constraint we

want to enforce on the first coordinate of the output vector that we use to

model the advisory COC. We use the WhyML extension predicate has_length to

further check that our inputs are of valid length.

The final WhyML specification of the \(\phi_1\) property looks like this:

theory ACASXU

use ieee_float.Float64

use int.Int

use caisar.types.Vector

use caisar.model.Model

type input = vector t

let constant distance_to_intruder: int = 0

let constant angle_to_intruder: int = 1

let constant intruder_heading: int = 2

let constant speed: int = 3

let constant intruder_speed: int = 4

type action = int

let constant clear_of_conflict: action = 0

let constant weak_left: action = 1

let constant weak_right: action = 2

let constant strong_left: action = 3

let constant strong_right: action = 4

type output = vector t

let constant pi: t = 3.141592653589793115997963468544185161590576171875000

let function denormalize_t (i: t) (mean: t) (range: t) : t =

(i .* range) .+ mean

let function denormalize_by_index (idx: int) (t: t) : t =

if idx = distance_to_intruder then denormalize_t t (19791.0:t) (60261.0:t)

else if idx = angle_to_intruder then denormalize_t t (0.0:t) (6.2831853071800001231395071954466402530670166015625:t)

else if idx = intruder_heading then denormalize_t t (0.0:t) (6.2831853071800001231395071954466402530670166015625:t)

else if idx = speed then denormalize_t t (650.0:t) (1100.0:t)

else if idx = intruder_speed then denormalize_t t (600.0:t) (1200.0:t)

else t

let function denormalize_input (i: input) : input =

Vector.mapi i denormalize_by_index

let function denormalize_output_t (o: t) : t =

denormalize_t o (7.51888402010059753166615337249822914600372314453125:t) (373.9499200000000200816430151462554931640625:t)

let function normalize_t (i: t) (mean: t) (range: t) : t =

(i .- mean) ./ range

let function normalize_by_index (idx: int) (t: t) : t =

if idx = distance_to_intruder then normalize_t t (19791.0:t) (60261.0:t)

else if idx = angle_to_intruder then normalize_t t (0.0:t) (6.2831853071800001231395071954466402530670166015625:t)

else if idx = intruder_heading then normalize_t t (0.0:t) (6.2831853071800001231395071954466402530670166015625:t)

else if idx = speed then normalize_t t (650.0:t) (1100.0:t)

else if idx = intruder_speed then normalize_t t (600.0:t) (1200.0:t)

else t

let function normalize_input (i: input) : input =

Vector.mapi i normalize_by_index

let function normalize_output_t (o: t) : t =

normalize_t o (7.51888402010059753166615337249822914600372314453125:t) (373.9499200000000200816430151462554931640625:t)

predicate valid_input (i: input) =

(0.0:t) .<= i[distance_to_intruder] .<= (60760.0:t)

/\ .- pi .<= i[angle_to_intruder] .<= pi

/\ .- pi .<= i[intruder_heading] .<= pi

/\ (100.0:t) .<= i[speed] .<= (1200.0:t)

/\ (0.0:t) .<= i[intruder_speed] .<= (1200.0:t)

predicate valid_action (a: action) =

clear_of_conflict <= a <= strong_right

predicate advises_min_score (o: output) (a: action) =

valid_action a ->

forall b: action. valid_action b -> a <> b -> o[a] .<= o[b]

predicate advises_max_score (o: output) (a: action) =

valid_action a ->

forall b: action. valid_action b -> a <> b -> o[a] .>= o[b]

(****************************************************************************)

(* Property 1.

If the intruder is distant and is significantly slower than the ownship,

the score of a COC advisory is at most 1500.

*)

predicate intruder_distant_and_slow (i: input) =

i[distance_to_intruder] .>= (55947.6909999999988940544426441192626953125:t)

/\ i[speed] .>= (1145.0:t)

/\ i[intruder_speed] .<= (60.0:t)

constant nn: model = read_model "nets/onnx/ACASXU_1_1.onnx"

goal P1:

forall i: input.

has_length i 5 ->

let j = denormalize_input i in

valid_input j /\ intruder_distant_and_slow j ->

let o = (nn @@ i)[clear_of_conflict] in

(denormalize_output_t o) .<= (1500.0:t)

end

A more general formulation of all ACAS-Xu properties is available in acasxu.why. The corresponding neural networks in ONNX format are available under the folder /examples/acasxu/nets/onnx/.

4.1.4. Verifying the property with CAISAR¶

Once formalized, the specified property can be assessed by using CAISAR. We will use the open-source provers CAISAR supports for verifying properties of neural networks so to take advantage of the federating approach: whenever one prover cannot provide an answer, another may instead. In particular, we will use maraboupy and nnenum.

Assuming the prover locations are available in PATH, the following are the

CAISAR verification invocations using Marabou first and nnenum afterwords, for

verifying the ACAS-Xu property \(\phi_1\):

$ caisar verify --prover maraboupy acasxu.why -t 10m

[caisar] Goal P1: Valid

$ caisar verify --prover nnenum property_1.why -t 10m

[caisar] Goal P1: Valid

Note that the previous commands set the verification time limit to 10 minutes

(cf. -t option). If you obtain a HighFailure error, you can

troubleshoot it by increasing the verbosity of CAISAR’s output (cf. -v

option).

Under the hood, CAISAR first translates each goal into a compatible form for the targeted provers, then calls the provers on them, and finally interprets and post-processes the prover results for displaying them in a coherent form to the user.

maraboupy and nnenum are able to prove the property valid in the specified time limit.

In general, the result of a CAISAR verification is typically

either Valid, Invalid, Unknown or Timeout. CAISAR may output

Failure whenever the verification process fails for whatever reason

(typically, a prover internal failure).

4.1.5. Using more advanced WhyML constructs¶

Let us model the ACAS-Xu property \(\phi_3\), and verify it for the neural networks \(N_{1,1}\) and \(N_{2,7}\) [Katz2017].

From the modelling standpoint, the main evident difference concerns the desired

output property, meaining that COC should not be the minimal value. A

straightforward way to express this property is that the neural network output

is greater than or equal to at least one of the other four outputs. We can write

a is_min predicate that, for a vector o and int i, states whether

the \(i\)-th coordinate of o is the minimal value:

predicate is_min (o: vector t) (i: int) =

forall j: int. 0 <= j < 5 -> i <> j -> o[i] .<= o[j]

We want to check the same property for two different neural networks. Of course,

we could define a theory with two identical but distinct verification goals or

two entirely distinct theories in a same WhyML file. However, these two

solutions are not advisable in terms of clarity and maintainability.

Reassuringly enough, CAISAR provides all necessary features to come up with a

better solution. Indeed, we can use the read_model extension to define as

many symbols as needed to represent distinct neural networks.

In the end, the WhyML file property_3.why looks like this:

theory ACASXU_P3

use int.Int

use ieee_float.Float64

use caisar.types.Vector

use caisar.model.Model

constant nn_1_1: model = read_model "nets/onnx/ACASXU_1_1.onnx"

constant nn_2_7: model = read_model "nets/onnx/ACASXU_2_7.onnx"

predicate valid_input (i: vector t) =

let rho = i[0] in

let theta = i[1] in

let psi = i[2] in

let vown = i[3] in

let vint = i[4] in

(-0.3035311560999999769272506000561406835913658142089843750000000000:t) .<= rho .<= (-0.2985528118999999924732026627257144078612327575683593750000000000:t)

/\ (-0.0095492965999999998572000947660853853449225425720214843750000000:t) .<= theta .<= (0.0095492965999999998572000947660853853449225425720214843750000000:t)

/\ (0.4933803236000000036476365039561642333865165710449218750000000000:t) .<= psi .<= (0.5:t)

/\ (0.2999999999999999888977697537484345957636833190917968750000000000:t) .<= vown .<= (0.5:t)

/\ (0.2999999999999999888977697537484345957636833190917968750000000000:t) .<= vint .<= (0.5:t)

predicate is_min (o: vector t) (i: int) =

forall j: int. 0 <= j < 5 -> i <> j -> o[i] .<= o[j]

goal P3_1_1:

forall i: vector t.

has_length i 5 -> valid_input i ->

let is_coc_min = is_min (nn_1_1 @@ i) 0 in

not is_coc_min

goal P3_2_7:

forall i: vector t.

has_length i 5 -> valid_input i ->

let is_coc_min = is_min (nn_2_7 @@ i) 0 in

not is_coc_min

end

Note how the two verification goals P3_1_1 and P3_2_7 are clearly almost

identical, but for the nn_x_y logic symbol used, identifying respectively

the ACASXU_1_1.onnx and ACASXU_2_7.onnx neural networks.

We can then verify the resulting verification problem as before:

$ caisar verify --prover Marabou property_3.why -t 10m

[caisar] Goal P3_1_1: Valid

[caisar] Goal P3_2_7: Valid

$ caisar verify --prover nnenum property_3.why -t 10m

[caisar] Goal P3_1_1: Valid

[caisar] Goal P3_2_7: Valid

It is interesting to remark that, since Marabou does not support disjunctive formulas, CAISAR first splits a disjunctive goal formula into conjunctive sub-goals, then calls Marabou on each sub-goal, and finally post-processes the sub-results to provide the final result corresponding to the original goal formula.

G. Manfredi and Y. Jestin, An introduction to ACAS Xu and the challenges ahead, 2016 IEEE/AIAA 35th Digital Avionics Systems Conference (DASC), 2016, pp. 1-9, doi: 10.1109/DASC.2016.7778055